The Operator’s Audit: Evaluating AI Video Pipelines for Sustainable Production

The shift from experimental prompting to a structured production pipeline is the moment an indie creator moves from hobbyist to operator. In the early days of generative media, the novelty of seeing a static image move was enough to justify the friction of disparate tools and unpredictable outputs. Today, that novelty has faded, replaced by the need for reliability, temporal coherence, and cost-efficiency.

For creators and small teams, the challenge is no longer finding an AI tool; it is auditing which tools fit into a repeatable workflow without introducing more technical debt than they solve. Establishing a sustainable production stack requires a cold-eyed evaluation of what a specific AI Video Generator can actually deliver versus what the marketing copy promises. This audit is not about finding the most "powerful" model, but the one that aligns with your specific constraints of time, budget, and visual fidelity.

Defining the Stability Threshold

The primary friction point in any video workflow is the "stability threshold." This refers to the model's ability to maintain character consistency and structural integrity over the duration of a clip. When evaluating a new tool, the first test should not be a complex cinematic prompt, but a series of basic tests designed to break the physics of the model.

Operators should look at how a model handles micro-movements: eye blinks, hand gestures, and the way cloth interacts with a body. Many systems look impressive in wide-angle landscape shots where the motion is a simple pan or zoom, but they fail when asked to simulate weight or physics-driven interaction. If your production requires character-driven storytelling, a model that cannot hold a character’s facial features across three different camera angles is a liability.

It is important to acknowledge a significant limitation here: currently, even the most advanced AI Video Generator models struggle with precise anatomical articulation. Expecting a model to perfectly replicate a complex piano performance or a high-speed athletic maneuver without significant artifacting is, at this stage, unrealistic. Operators must build their workflows around these "no-go" zones or plan for heavy post-production masking.

The Image-to-Video vs. Text-to-Video Dilemma

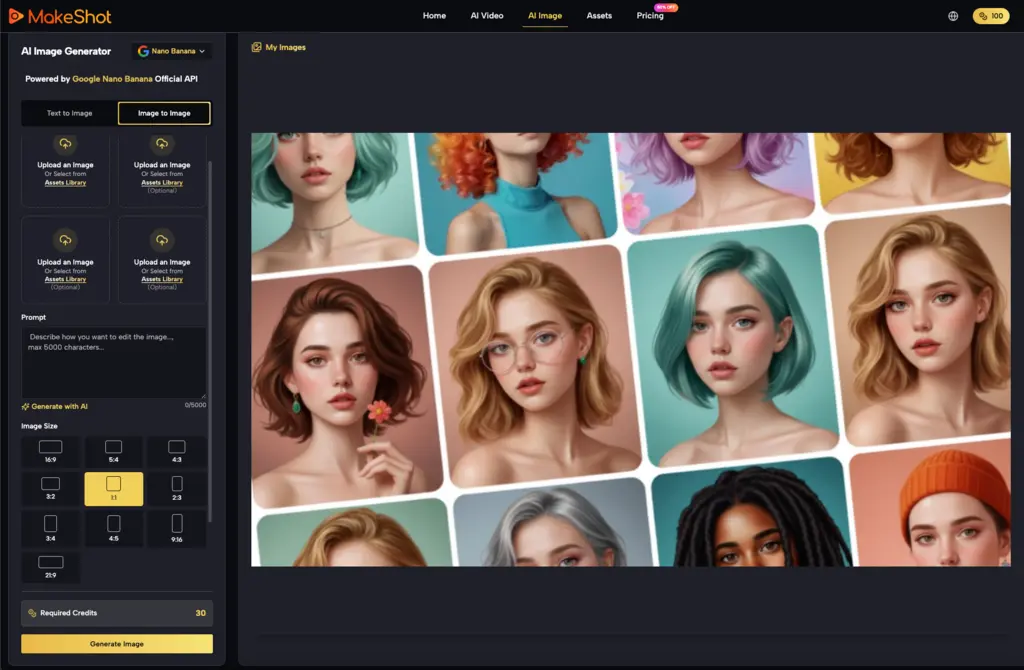

A critical part of the audit is determining your entry point. Text-to-video (T2V) offers the highest level of creative freedom but the lowest level of structural control. For most professional-grade outputs, the "Image-to-Video" (I2V) workflow is the preferred path. By generating a high-quality static frame first, using models like Flux or Midjourney, you establish a visual anchor. The AI Video Generator then only has to solve the problem of motion, rather than the simultaneous problems of composition, lighting, and movement.

When evaluating an I2V pipeline, look for "motion brush" or "area-specific" controls. A tool that treats the entire frame as a single animated layer is often less useful than one that allows you to specify which elements should remain static. If you are producing a product shot, you want the product to remain sharp while the background bokeh shifts. Without these controls, the tool is often a black box, forcing you to roll the dice on every generation.

Heterogeneous Model Strategy

No single model wins every category. A robust operator-led workflow often involves using multiple models for different types of shots. For example, Google’s Veo might excel at cinematic lighting, while a model like Kling or Sora might handle fluid character motion more effectively.

Using a platform that aggregates these models into a single interface simplifies the stack. Instead of managing five different subscriptions and disparate prompt formats, creators can test a single prompt across several engines. This comparative approach is essential during the pre-production phase. If Model A produces better textures for a sci-fi environment but Model B handles the human elements better, your pipeline should be flexible enough to accommodate both, stitching them together in a traditional NLE (Non-Linear Editor).
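The comparison described above can be kept as a simple scorecard. The following is a minimal sketch, assuming you rate each test clip by hand on a numeric scale; the model names, shot types, and the `best_model_per_shot` helper are hypothetical and not part of any platform's API.

```python
from collections import defaultdict


def best_model_per_shot(ratings):
    """Pick the model with the highest average rating for each shot type.

    `ratings` is a list of (model, shot_type, score) tuples, where score is
    a manual rating you assign after reviewing a generated clip.
    """
    by_shot = defaultdict(lambda: defaultdict(list))
    for model, shot_type, score in ratings:
        by_shot[shot_type][model].append(score)

    picks = {}
    for shot_type, models in by_shot.items():
        # Average the scores per model, then keep the top scorer.
        picks[shot_type] = max(
            models, key=lambda m: sum(models[m]) / len(models[m])
        )
    return picks


# Hypothetical pre-production ratings on a 1-5 scale.
ratings = [
    ("model_a", "environment", 4),
    ("model_b", "environment", 3),
    ("model_a", "character", 2),
    ("model_b", "character", 5),
    ("model_b", "character", 4),
]
picks = best_model_per_shot(ratings)
```

With these sample ratings, the environment shots would go to one model and the character shots to the other, which is the split you would then assemble in the NLE.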

Assessing Latency and Iteration Speed

In a production environment, "time to first frame" and "time to final render" are more important than theoretical peak quality. If a high-end model takes fifteen minutes to generate five seconds of video, the iteration loop becomes too slow for rapid prototyping.

Indie makers should evaluate the "quality-to-latency" ratio. There are moments where a faster, lower-resolution preview model is more valuable for blocking out a scene than a slow, high-fidelity one. It remains unclear when "real-time" generative video will truly arrive for the average consumer. While some local deployments show promise, the cloud-based infrastructure required for high-resolution temporal consistency still imposes a significant delay that disrupts the creative flow. This latency is a structural bottleneck that operators must account for by batching their generations rather than waiting for single clips to finish.
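One way to picture batching is to submit several prompts at once and collect the results together. This is a minimal sketch using Python's standard `concurrent.futures` module; `generate_clip` is a hypothetical placeholder that simulates a slow, blocking request, and a real service would have its own client, rate limits, and queueing rules.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def generate_clip(prompt):
    """Hypothetical stand-in for a blocking call to a video service."""
    time.sleep(0.05)  # simulated render latency, not a real measurement
    return f"clip for: {prompt}"


def generate_batch(prompts, max_workers=4):
    """Run several generations concurrently and return results in order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves the order of the input prompts.
        return list(pool.map(generate_clip, prompts))


clips = generate_batch(["wide shot, dusk", "close-up, rain", "slow pan, fog"])
```

Threads suit this case because the work is waiting on a remote service rather than local computation; the wall-clock time for the batch approaches that of the slowest single clip instead of the sum of all clips.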

Technical Constraints and Export Flexibility

An often-overlooked part of the audit is the technical output format. A tool might generate beautiful visuals but export them in a proprietary format or with baked-in watermarks that make professional use impossible.

The following technical specs should be verified:

- Aspect Ratio Support: Does the AI Video Generator support 9:16, 16:9, and 4:3 natively, or does it crop and stretch?
- Resolution and Upscaling: Does it offer built-in temporal upscaling, or will you need a third-party tool like Topaz to make the footage usable on a 4K timeline?
- Frame Rate Consistency: Variable frame rates can cause sync issues in professional editing suites. A tool that outputs a constant 24fps or 30fps is inherently more valuable.
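Some of these checks can be scripted against clip metadata. The sketch below assumes the metadata is a dictionary shaped like a video stream entry from `ffprobe -show_streams -of json`, with `width`, `height`, `r_frame_rate`, and `avg_frame_rate` fields. The `check_export` helper and its allowed values are illustrative assumptions, and comparing the two rate fields is only a rough heuristic for spotting variable frame rate.

```python
from fractions import Fraction


def parse_rate(rate):
    """Parse a rate string such as "24/1"; return None if it is undefined."""
    num, _, den = rate.partition("/")
    den = int(den) if den else 1
    return Fraction(int(num), den) if den else None


def check_export(
    stream,
    allowed_ratios=(Fraction(9, 16), Fraction(16, 9), Fraction(4, 3)),
    allowed_fps=(Fraction(24), Fraction(30)),
):
    """Return a list of human-readable issues found in the stream metadata."""
    issues = []

    ratio = Fraction(stream["width"], stream["height"])
    if ratio not in allowed_ratios:
        issues.append(f"unexpected aspect ratio {ratio}")

    nominal = parse_rate(stream["r_frame_rate"])
    average = parse_rate(stream["avg_frame_rate"])
    if nominal is None or average is None or nominal != average:
        # Heuristic only: a mismatch suggests the frame rate may vary.
        issues.append("possible variable frame rate")
    elif nominal not in allowed_fps:
        issues.append(f"unexpected frame rate {nominal}")

    return issues


clean = check_export(
    {"width": 1920, "height": 1080, "r_frame_rate": "24/1", "avg_frame_rate": "24/1"}
)
```

An empty list means the clip passed these particular checks. If your timeline uses other rates, such as 24000/1001, add them to `allowed_fps`.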

The Economic Feasibility of the Workflow

Generative video is computationally expensive. Operators need to calculate the "cost per usable second." If a model has a 10% success rate, meaning you have to generate ten clips to get one that isn't riddled with hallucinations, your actual cost is ten times the advertised credit price.
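The arithmetic behind "cost per usable second" can be written down directly. This is a minimal sketch, assuming each attempt costs the same and succeeds independently at a fixed rate, so the expected number of attempts per usable clip is one divided by the success rate. The function name and the example prices are illustrative, not taken from any vendor.

```python
def cost_per_usable_second(price_per_clip, clip_seconds, success_rate):
    """Estimate the effective cost of one second of usable footage.

    price_per_clip: advertised cost of a single generation
    clip_seconds:   length of each generated clip, in seconds
    success_rate:   fraction of generations that are usable (0 < rate <= 1)
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    if clip_seconds <= 0:
        raise ValueError("clip_seconds must be positive")

    expected_attempts = 1 / success_rate
    return price_per_clip * expected_attempts / clip_seconds


# Illustrative numbers: a 5-second clip at 0.50 per generation.
unreliable = cost_per_usable_second(0.50, 5, 0.10)
predictable = cost_per_usable_second(0.50, 5, 0.50)
```

At the same advertised price, the model with a 10% success rate costs five times as much per usable second as the one with a 50% success rate.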

A sustainable workflow favors models with high predictability over those that occasionally produce "miracle" shots but usually fail. For indie makers, credit management is as much a part of the job as art direction. Choosing a tool that offers a transparent pricing structure or a "Pro" tier with unlimited generations can prevent the project-killing anxiety of watching a budget disappear into failed renders.

Physics, Weight, and Motion Logic

One of the hardest things for an AI Video Generator to master is the concept of weight. When a character in an AI-generated clip walks, do their feet make solid contact with the ground, or do they slide across the floor? When an object falls, does it accelerate naturally?

Evaluating these factors helps you decide where to use AI and where to use stock footage or traditional 3D animation. AI excels at organic, chaotic motion: smoke, water, fire, and shifting clouds. It often fails at mechanical motion: gears turning, cars driving with proper wheel rotation, or a character picking up a specific object. A production audit should identify these weaknesses early. If your script involves a character precisely threading a needle, you are likely setting yourself up for failure if you rely solely on generative video.

Conclusion: The Operator’s Mindset

Adopting an AI video workflow is not a matter of clicking a "generate" button and receiving a finished film. It is an iterative process of evaluation, constraint management, and technical auditing. The goal of the operator is to reduce the "shimmer" and randomness of AI and replace it with a controlled, repeatable output.

By focusing on the stability threshold, leveraging I2V workflows, and maintaining a heterogeneous model strategy, indie makers can build pipelines that are both creatively expressive and commercially viable. The tools will continue to evolve, but the fundamental need to audit their reliability remains the same. Whether you are using a specialized AI Video Generator for a single shot or an entire sequence, the success of the project depends on your ability to see the tool for what it is: a powerful, yet limited, partner in the creative process. Building a sustainable production isn't about chasing the latest model; it's about mastering the one that works for your specific needs today.
