Escaping The Uncanny Valley With Seedance 2.0 High Fidelity Simulation

For the past several years, generative video has existed in a strange limbo known as the "Uncanny Valley." We have seen technology capable of producing recognizable human forms and landscapes, yet something was always slightly off. The movement was too fluid, the eyes too dead, or the physics simply wrong. This disconnect triggered an instinctive rejection in the viewer, making AI video interesting as a novelty but useless for emotional storytelling. The release of Seedance 2.0 on February 12, 2026, represents a significant technical leap across this chasm. By combining a new high-resolution VAE architecture with a physics-aware diffusion model, ByteDance has created a tool that prioritizes sensory cohesion over random generation, offering creators a way to produce video that feels grounded in reality.

Analyzing The Architecture Of Sensory Immersion

The primary failure point of earlier video models was their inability to maintain "object permanence" and physical consistency. A car driving down a street might suddenly slide sideways like a hovercraft, or a glass of water might behave like jelly. In my observation of the Seedance 2.0 framework, its Diffusion Transformer (DiT) architecture fundamentally changes how these sequences are generated. Instead of predicting pixels from visual patterns alone, the model appears to simulate the underlying physical properties of the scene.

Grounding Visuals Through Synchronized Acoustic Feedback

Visuals alone are rarely enough to sell reality. The brain expects to hear the world it sees. The introduction of "Native Audio" synthesis in this model is a critical factor in breaking the uncanny valley. When the neural network generates a visual event—such as a heavy door slamming—it simultaneously generates the corresponding acoustic waveform.
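
To make "frame-accurate" concrete, the sketch below maps a video frame index to the range of audio samples that play while that frame is on screen. The 24 fps frame rate and 48 kHz sample rate are illustrative assumptions, not published Seedance 2.0 output settings. At 24 fps, one frame lasts about 41.7 ms, so a sound that is off by a single frame is already a noticeable slip.

```python
from fractions import Fraction


def frame_to_sample_range(frame_index: int, fps: int = 24, sample_rate: int = 48_000) -> tuple[int, int]:
    """Return the [start, end) audio sample indices that play during one video frame.

    fps and sample_rate are illustrative assumptions, not Seedance 2.0 settings.
    """
    if frame_index < 0:
        raise ValueError("frame_index must be non-negative")
    # Exact rational arithmetic keeps frame boundaries from drifting when the
    # samples-per-frame value is fractional (44.1 kHz at 24 fps gives 1837.5).
    samples_per_frame = Fraction(sample_rate, fps)
    start = int(frame_index * samples_per_frame)  # int() floors non-negative values
    end = int((frame_index + 1) * samples_per_frame)
    return start, end
```

Suppose a door slam lands on frame 120 of a 24 fps clip, which is the 5-second mark. At 48 kHz, `frame_to_sample_range(120)` gives `(240000, 242000)`, so the slam's waveform onset should fall inside that window. Consecutive frames share boundaries exactly, so no samples are skipped or double-counted.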

Understanding The Psychoacoustic Impact Of Native Sound

This synchronization does more than save time; it triggers a stronger belief response in the viewer. If the sound of footsteps on gravel perfectly matches the visual cadence of the walk cycle, the brain accepts the scene as "real" much faster. This multimodal approach—processing light and sound as a unified data stream—creates a layer of immersion that silent, purely visual models simply cannot achieve.

Decoding Director Intent With Advanced Language Models

Another major hurdle has been the communication gap between human intent and machine output. The integration of the Qwen2.5 language model allows Seedance 2.0 to interpret the nuance of emotional and atmospheric prompts. It understands the difference between "sad lighting" and "dramatic lighting," translating these abstract concepts into specific color grading and shadow diffusion settings. This allows creators to direct the mood of the scene, not just the content.

Establishing A Professional Pipeline For Realistic Generation

While the underlying math involves complex tensor calculations, the user interface is designed to abstract this complexity into a linear, four-stage workflow. This process mirrors the logic of a traditional post-production house but compresses the timeline from weeks to minutes.

Crafting The Scene With Precision Prompting

The workflow begins with "Describe Vision." This is where the creator defines the laws of the simulation. The system encourages detailed prompts that specify texture, lighting (e.g., "volumetric fog," "subsurface scattering"), and camera behavior. The inclusion of Image-to-Video capabilities allows for the use of high-fidelity reference images, ensuring that the texture and material properties of the subject are locked in before motion is applied.
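
One way to keep detailed prompts consistent across shots is to assemble them from named parts. The helper below is hypothetical and is not part of any Seedance API. It simply joins subject, texture, lighting, and camera notes into one text prompt. The optional reference image path is only carried along, on the assumption that an Image-to-Video workflow would supply the image separately from the text.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ScenePrompt:
    """Hypothetical prompt builder; Seedance 2.0's actual prompt format is not documented here."""
    subject: str
    textures: list[str] = field(default_factory=list)
    lighting: list[str] = field(default_factory=list)
    camera: str = ""
    # Carried alongside the prompt for Image-to-Video use; not rendered into the text.
    reference_image: Optional[str] = None

    def render(self) -> str:
        """Join the non-empty parts into sentence-like clauses."""
        parts = [self.subject]
        if self.textures:
            parts.append("textures: " + ", ".join(self.textures))
        if self.lighting:
            parts.append("lighting: " + ", ".join(self.lighting))
        if self.camera:
            parts.append("camera: " + self.camera)
        return ". ".join(parts) + "."
```

For example, `ScenePrompt("A lighthouse keeper climbs a spiral staircase", textures=["weathered brass", "wet stone"], lighting=["volumetric fog"], camera="slow dolly upward").render()` yields a single prompt string with each aspect labeled. This makes it easy to vary one element, such as lighting, while holding the rest fixed.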

Defining The Technical Container For The Simulation

The second step is "Configure Parameters." Here, the user sets the resolution and aspect ratio. The model supports native 1080p generation, which is essential for displaying fine details like skin texture or fabric weave, elements that often blur in lower-resolution models. Users can choose aspect ratios ranging from 16:9 for cinematic output to 9:16 for mobile, and set the duration. Individual clips run 5 to 12 seconds, and the architecture supports sequencing them into longer, coherent narratives of up to 60 seconds.
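
The parameter arithmetic can be sketched as follows. The sketch assumes "1080p" means 1080 pixels on the shorter side, uses whole-second durations, and uses the 5 to 12 second clip range and 60-second ceiling stated above. How Seedance 2.0 actually splits or stitches clips is not described, so `plan_clips` is a hypothetical planner that picks the fewest clips and spreads the seconds evenly.

```python
import math

MIN_CLIP_S, MAX_CLIP_S, MAX_SEQUENCE_S = 5, 12, 60  # limits as described in the text


def frame_size(aspect: str, short_side: int = 1080) -> tuple[int, int]:
    """(width, height) for an aspect ratio like '16:9', with 1080 on the shorter side."""
    w, h = (int(x) for x in aspect.split(":"))
    if w >= h:
        return round(short_side * w / h), short_side
    return short_side, round(short_side * h / w)


def plan_clips(total_s: int) -> list[int]:
    """Split a target duration into the fewest clips that each last 5-12 seconds."""
    if not MIN_CLIP_S <= total_s <= MAX_SEQUENCE_S:
        raise ValueError(f"total duration must be {MIN_CLIP_S}-{MAX_SEQUENCE_S} s")
    n = math.ceil(total_s / MAX_CLIP_S)  # fewest clips that respect the 12 s cap
    base, extra = divmod(total_s, n)  # distribute seconds as evenly as possible
    return [base + 1] * extra + [base] * (n - extra)
```

With these rules, `frame_size("16:9")` is `(1920, 1080)` and `frame_size("9:16")` is `(1080, 1920)`. A 25-second sequence becomes clips of `[9, 8, 8]`, and a full 60-second sequence becomes five 12-second clips. Choosing the fewest clips keeps the number of shot transitions, and therefore the number of chances for character drift, as low as possible.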

Rendering Physics And Audio Simultaneously

The third phase is "AI Processing." This is the computational heavy lifting. The model calculates the light transport and object deformation while simultaneously synthesizing the environmental audio. Unlike simpler models that might "hallucinate" motion, this phase focuses on maintaining temporal consistency, ensuring that gravity and momentum act predictably on the objects in the frame.
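
The claim that gravity "acts predictably" can be turned into a check on the output. For an object falling under uniform gravity, the second difference of its height across consecutive frames, divided by the squared frame interval, stays at about -9.81 m/s² (with up as positive). The sketch below applies that test. It assumes you already have per-frame heights in meters for a tracked object; obtaining them requires object tracking and a scale reference, which are outside this sketch. The 0.5 m/s² tolerance is arbitrary. This is an evaluation idea, not a description of how Seedance 2.0 enforces physics internally.

```python
G = 9.81  # gravitational acceleration, m/s^2


def expected_drop(frames: int, fps: int = 24, y0: float = 0.0) -> list[float]:
    """Height (m, up positive) of an object released from rest, sampled once per frame."""
    dt = 1.0 / fps
    return [y0 - 0.5 * G * (i * dt) ** 2 for i in range(frames)]


def estimated_acceleration(ys: list[float], fps: int = 24) -> list[float]:
    """Second finite differences of height; constant under uniform gravity."""
    dt = 1.0 / fps
    return [(ys[i + 1] - 2 * ys[i] + ys[i - 1]) / dt**2 for i in range(1, len(ys) - 1)]


def looks_newtonian(ys: list[float], fps: int = 24, tol: float = 0.5) -> bool:
    """True if every per-frame acceleration estimate is within tol of free fall."""
    if len(ys) < 3:
        raise ValueError("need at least three frames to estimate acceleration")
    return all(abs(a + G) <= tol for a in estimated_acceleration(ys, fps))
```

A true free-fall trajectory such as `expected_drop(24)` passes. A "dream logic" object that hovers at a constant height (`[1.0] * 24`) fails, because its estimated acceleration is zero rather than about -9.81 m/s².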

Finalizing The Asset For Broadcast Integration

The final step is "Export & Share." The system outputs a high-bitrate MP4 file, free of watermarks. Because the audio and video are generated as a single, synchronized stream, there is no need for manual alignment in post-production. The file is ready to be graded or edited immediately.
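
Because audio and video are described as arriving in one synchronized file, a quick sanity check on an export is to compare the durations of its audio and video streams. The sketch below calls `ffprobe` (part of FFmpeg, assumed to be installed) with its `-show_entries` and `-of json` options. The file name in the usage line is hypothetical. Matching durations are necessary but not sufficient for sync, since they say nothing about whether individual sounds land on the right frames.

```python
import json
import subprocess


def probe_streams(path: str) -> dict:
    """Run ffprobe and return its JSON report of each stream's type and duration."""
    result = subprocess.run(
        ["ffprobe", "-v", "error",
         "-show_entries", "stream=codec_type,duration",
         "-of", "json", path],
        capture_output=True, text=True, check=True,
    )
    return json.loads(result.stdout)


def av_drift_seconds(report: dict) -> float:
    """Absolute difference between the first video and first audio stream durations."""
    durations: dict[str, float] = {}
    for stream in report.get("streams", []):
        kind = stream.get("codec_type")
        if kind in ("video", "audio") and kind not in durations and "duration" in stream:
            durations[kind] = float(stream["duration"])
    if set(durations) != {"video", "audio"}:
        raise ValueError("report needs both a video and an audio stream with a duration")
    return abs(durations["video"] - durations["audio"])
```

Usage might look like `drift = av_drift_seconds(probe_streams("export.mp4"))`. Small differences of a few tens of milliseconds are common because audio encoders pad the final audio frame. A threshold of about one video frame is therefore a reasonable starting point.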

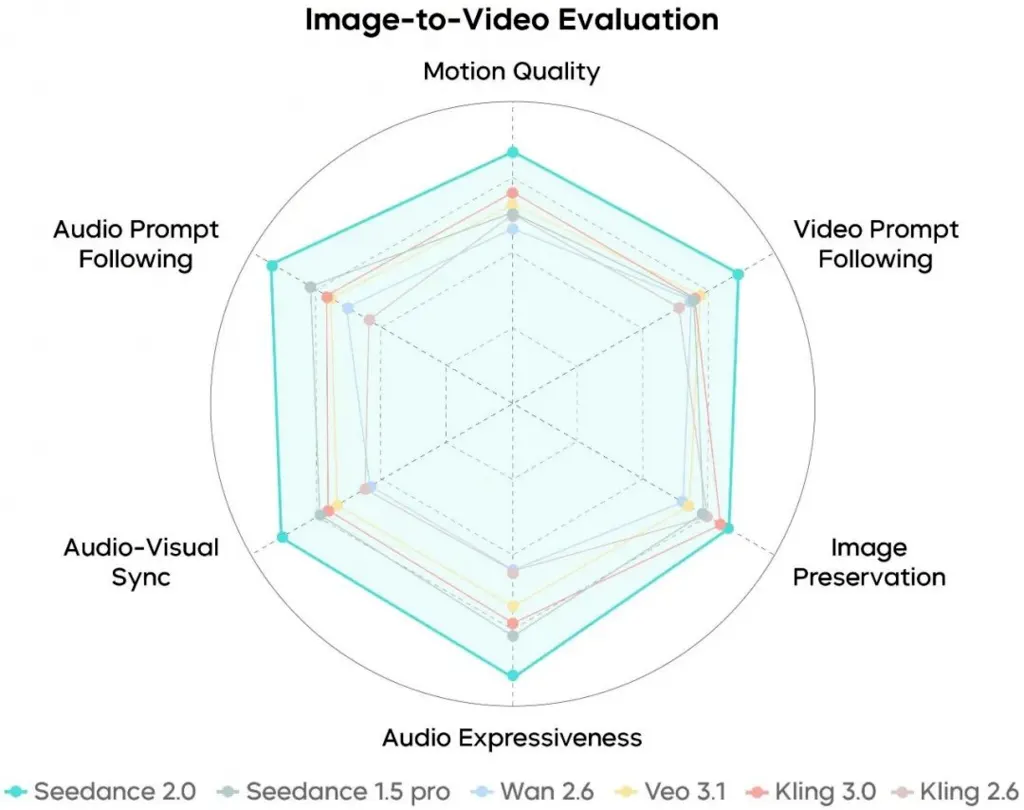

Benchmarking The Fidelity Of Generative Simulation

To understand the practical difference between "generating a video" and "simulating a scene," it is helpful to compare the output characteristics of Seedance 2.0 against the previous generation of AI video tools.

| Immersion Factor | Standard Generative Video | Seedance 2.0 Simulation |
| --- | --- | --- |
| Physics Fidelity | "Dream logic"; objects float/morph. | Newtonian approximation; mass/gravity respected. |
| Audio Presence | Silent; visual disconnect. | Native, frame-accurate environmental audio. |
| Texture Quality | Often blurry/smudged in motion. | 1080p sharpness with temporal stability. |
| Narrative Flow | Disjointed loops; character drift. | Coherent multi-shot sequences (up to 60 s). |
| Lighting Logic | Inconsistent shadow placement. | Ray-tracing-style light consistency. |

Redefining The Emotional Potential Of AI Media

The shift illustrated in the table above moves AI video from a tool for "content filling" to a tool for "storytelling." When a creator can rely on the physics of a tear falling or the sound of a sigh being accurate, they can begin to use these tools to evoke genuine emotion.

Navigating The Limits Of Current Simulation Technology

It is important to maintain a balanced perspective. While Seedance 2.0 significantly reduces the "uncanny" effect, it does not eliminate it entirely. Complex human emotions and micro-expressions are still the hardest hurdle for any AI, and there will be moments where a smile feels artificial or a blink looks mechanical. Furthermore, the generation of high-fidelity, audio-synced content is computationally expensive, meaning users must be patient with processing times.
