Empowering Creative Workflows Through Advanced Generative Motion Synthesis Technology

The demand for high-quality video content has never been greater, yet the barriers to entry remain high for many independent creators and small businesses. Traditional video production involves a steep learning curve, expensive equipment, and a time investment that can stall the creative process. This friction pushes many creators toward static posts, which are easily overlooked in crowded social feeds and cost them audience connection and brand growth. To lower these barriers, Image to Video AI offers a solution that democratizes the creation of cinematic motion. Using advanced generative models, the platform turns existing photos into dynamic five-second videos, shortening the content pipeline and raising the production quality of the output.

The Democratization Of High-End Visual Effects For Modern Creators

The shift toward AI-driven video synthesis marks a turning point in visual media. Previously, creating a realistic animation from a single photo required a deep understanding of 3D modeling and projection mapping. Based on my observations, the current generation of AI tools has moved these capabilities into the hands of non-technical users. This democratization is not merely about speed; it is about allowing the creative intent to remain the primary driver of the work, rather than the technical constraints of the software.

How Latent Consistency Models Accelerate Traditional Production Timelines

At the heart of this technology are latent consistency models and transformer-based architectures. Unlike older methods that operated directly on high-resolution pixels, these models work in a compressed latent space, a lower-dimensional representation of the image learned by an autoencoder. Because each frame is described by far fewer values, the model can work out complex motion and lighting changes much faster than it could in pixel space, and consistency models further reduce the number of sampling steps needed per clip. When applied to an image, the system identifies key landmarks, such as facial features or horizon lines, and aims to keep them consistent across every frame of the generated video.
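To make the speed argument concrete, the arithmetic below compares how many values describe one frame in pixel space versus latent space. It is a minimal sketch that assumes the 8x spatial downsampling and 4 latent channels used by the Stable Diffusion autoencoder, plus an assumed 24 fps for a five-second clip; the platform's actual latent shape and frame rate are not stated here.

```python
# Assumption: 8x spatial downsampling and 4 latent channels, as in the
# Stable Diffusion autoencoder. The platform's real latent shape is unknown.

def element_count(height: int, width: int, channels: int) -> int:
    """Number of values the model must process for one frame."""
    return height * width * channels


def latent_compression(height: int, width: int,
                       pixel_channels: int = 3,
                       downsample: int = 8,
                       latent_channels: int = 4) -> float:
    """Ratio of pixel-space values to latent-space values for one frame."""
    pixels = element_count(height, width, pixel_channels)
    latents = element_count(height // downsample, width // downsample, latent_channels)
    return pixels / latents


if __name__ == "__main__":
    ratio = latent_compression(1024, 1024)
    print(f"A 1024x1024 RGB frame holds {ratio:.0f}x more values than its latent")
    # Assumption: a five-second clip rendered at 24 frames per second.
    frames = 5 * 24
    print(f"{frames} frames -> {frames * element_count(128, 128, 4):,} latent values")
```

Under these assumptions every 8x8 block of RGB pixels (192 values) becomes 4 latent values, a 48x reduction per frame, which is why sampling in latent space is so much cheaper than working on raw pixels.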

The Psychological Resonance Of Personalized AI Generated Video Content

There is a unique emotional impact when a still memory is brought to life. For personal projects, such as animating old family photographs, the technology provides a sense of presence that static images lack. In a marketing context, this can translate into greater trust and relatability. When a product photo subtly moves, it feels more tangible and real to the consumer, which can positively influence purchasing decisions.

Reducing Production Overheads In Competitive Digital Marketing Ecosystems

For businesses, the primary value proposition lies in cost reduction. Instead of organizing a full-day shoot for a simple social media ad, a marketer can take a high-quality product photo and generate multiple video variations in under an hour. In my testing, I have found that using a clear, well-lit source image significantly reduces the likelihood of visual glitches, ensuring a cleaner final product that is ready for immediate deployment.

Optimizing The Interaction Between Textual Prompts And Visual Output

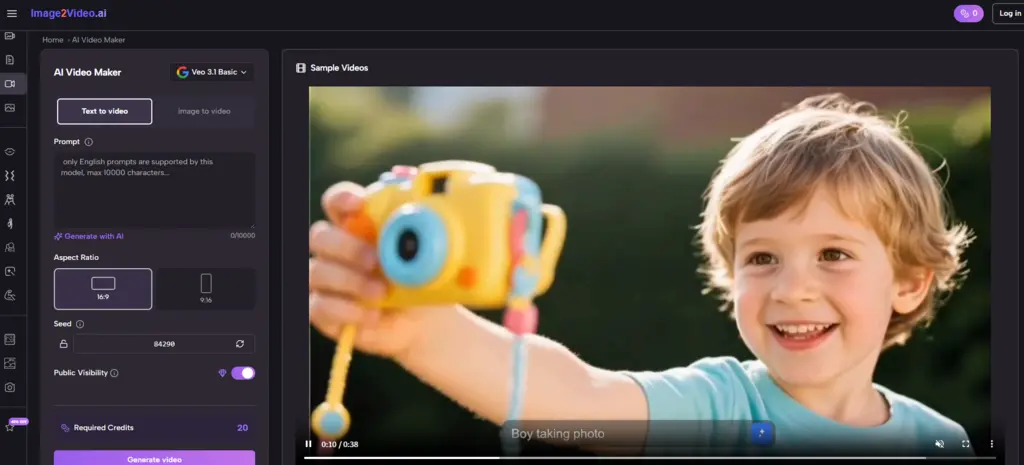

The success of the generation process depends heavily on how clearly the user communicates with the model. Effective prompting balances descriptive detail with conceptual clarity.

- Upload High-Fidelity Assets: Start with a 4K or high-definition JPEG or PNG. The AI uses the details in the original file to construct the textures for the new frames.

- Draft Precise Motion Prompts: Instead of writing "make it move," specify "the person waves their hand slowly while smiling." Precise verbs and adverbs help the model narrow down the range of possible motions.

- Monitor The Processing Phase: Generation typically takes about five minutes per clip. In my observations, the system seems more stable when it processes one high-quality request at a time.

- Export And Iterate: The final MP4 is a five-second clip that can be used as a loop or on its own. If the result is not quite right, adjusting the prompt and regenerating is a standard part of a professional workflow.
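The upload and prompting steps above can be sketched as a small preflight helper that runs before anything is submitted. This is a hypothetical illustration, not part of any Image to Video AI API: `check_source`, `build_motion_prompt`, `is_vague`, the 1080-pixel minimum, and the list of vague phrases are all assumptions chosen for the example.

```python
from pathlib import Path

# Assumption: the article recommends high-definition JPEG/PNG sources; the
# 1080 px minimum below is an illustrative cutoff, not a documented rule.
ALLOWED_SUFFIXES = {".jpg", ".jpeg", ".png"}
MIN_SHORT_SIDE = 1080

# Assumption: a hand-picked list of phrases that describe no specific motion.
VAGUE_PHRASES = ("make it move", "animate this", "do something")


def check_source(filename: str, width: int, height: int) -> list[str]:
    """Return warnings about a source image before it is uploaded."""
    warnings = []
    if Path(filename).suffix.lower() not in ALLOWED_SUFFIXES:
        warnings.append("use a JPEG or PNG file")
    if min(width, height) < MIN_SHORT_SIDE:
        warnings.append(f"short side is under {MIN_SHORT_SIDE} px; fine detail may blur")
    return warnings


def build_motion_prompt(subject: str, action: str, manner: str, extra: str = "") -> str:
    """Join a subject, a precise verb phrase, an adverb, and optional context."""
    parts = [subject, action, manner, extra]
    return " ".join(p.strip() for p in parts if p.strip())


def is_vague(prompt: str) -> bool:
    """Flag prompts that contain a vague phrase or are too short to describe motion."""
    text = prompt.lower()
    return any(phrase in text for phrase in VAGUE_PHRASES) or len(text.split()) < 4


if __name__ == "__main__":
    print(check_source("product.webp", 800, 600))  # two warnings
    prompt = build_motion_prompt("the person", "waves their hand", "slowly", "while smiling")
    print(prompt)                    # the person waves their hand slowly while smiling
    print(is_vague(prompt))          # False
    print(is_vague("make it move"))  # True
```

Splitting the prompt into subject, verb phrase, and adverb mirrors the advice to use precise verbs and adverbs; the vagueness check is deliberately crude and only meant to catch the "make it move" pattern.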

Harnessing Cinematic Motion Controls For Enhanced Storytelling Depth

A standout feature for professional users is the inclusion of directional camera controls. The ability to simulate a slow zoom-in or a cinematic tilt adds a layer of intentionality to the video. This is particularly useful for e-commerce, where a dolly shot can emphasize specific product features. In my experience, these camera movements appear most fluid when they complement the natural lines of the source image.
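A slow zoom-in can be approximated in plain 2D with a shrinking, centered crop, the classic "Ken Burns" effect. The sketch below is not how a generative model synthesizes camera motion (the model can also invent new pixels and parallax); it only illustrates the timing and easing such a move implies. The function names, the 24 fps frame rate, and the 1.2x end zoom are assumptions.

```python
import math
from dataclasses import dataclass


@dataclass
class Crop:
    x: float
    y: float
    width: float
    height: float


def ease_in_out(t: float) -> float:
    """Cosine ease for t in [0, 1]: slow at the start and end, faster in the middle."""
    return 0.5 - 0.5 * math.cos(math.pi * t)


def zoom_in_schedule(width: int, height: int, seconds: float = 5.0,
                     fps: int = 24, end_zoom: float = 1.2) -> list[Crop]:
    """Centered crop rectangles, one per frame, for a slow 2D zoom-in."""
    frames = int(round(seconds * fps))
    crops = []
    for i in range(frames):
        # Normalized time from 0 (first frame) to 1 (last frame).
        t = i / (frames - 1) if frames > 1 else 1.0
        zoom = 1.0 + (end_zoom - 1.0) * ease_in_out(t)
        w, h = width / zoom, height / zoom
        crops.append(Crop((width - w) / 2, (height - h) / 2, w, h))
    return crops


if __name__ == "__main__":
    schedule = zoom_in_schedule(1920, 1080)
    print(len(schedule), schedule[0], schedule[-1])
```

The cosine ease keeps the first and last frames nearly still, which helps the move read as a deliberate dolly rather than an abrupt jump.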

Evaluating Performance Metrics Across Various Professional Creative Environments

The utility of generative motion tools varies depending on the specific application. The following table highlights how different sectors can leverage these features.

| Application Area | Key Use Case | Expected Benefit | Recommended Motion Type |
|---|---|---|---|
| E-commerce | Product Showcases | Higher Conversion Rates | 360-Degree Rotation / Zoom |
| Social Media | Influencer Content | Increased Reach | AI Dance / Character Action |
| Education | Visual Tutorials | Better Information Retention | Animated Infographics |
| Personal Heritage | Animate Old Photos | Emotional Connection | Subtle Facial Expressions |
| Marketing | Video Ad Creation | Lower Production Costs | Dynamic Background Motion |

Addressing The Limitations Of Prompt-Based Animation Generation

It is vital to understand that AI video generation is a probabilistic process, not a deterministic one. This means that providing the same image and prompt twice may yield slightly different results. The technology currently specializes in short-form content, and users should be aware that the five-second limit is a fixed constraint. Additionally, while the system is highly capable, it may occasionally struggle with very fine details, such as complex text or intricate mechanical parts, which might appear slightly blurred during movement. These factors suggest that for high-stakes projects, the "generate and refine" approach is the most effective strategy.
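The "generate and refine" strategy can be sketched as a loop over random seeds. This is a hypothetical illustration: `fake_generate` stands in for a real generator and returns a made-up quality score in place of human review, and whether the platform exposes a seed at all is not stated. The point is that, for a fixed image and prompt, the sampler's random seed is what makes two runs differ.

```python
import random
from typing import Callable, Iterable, Optional, Tuple


def fake_generate(image_id: str, prompt: str, seed: int) -> float:
    """Hypothetical stand-in for one generation run.

    Returns a quality score in [0, 1). The same (image, prompt, seed) always
    gives the same score, while changing only the seed changes the outcome,
    mimicking how a sampler's random noise drives the result.
    """
    rng = random.Random(f"{image_id}|{prompt}|{seed}")
    return rng.random()


def generate_and_refine(image_id: str, prompt: str, seeds: Iterable[int],
                        generate: Callable[[str, str, int], float],
                        good_enough: float = 0.8) -> Tuple[Optional[int], float]:
    """Try several seeds, keep the best result, and stop once one is good enough."""
    best_seed, best_score = None, float("-inf")
    for seed in seeds:
        score = generate(image_id, prompt, seed)
        if score > best_score:
            best_seed, best_score = seed, score
        if best_score >= good_enough:
            break
    return best_seed, best_score


if __name__ == "__main__":
    seed, score = generate_and_refine("product.png", "the mug rotates slowly",
                                      range(5), fake_generate)
    print(seed, round(score, 3))
```

If no seed clears the bar, the next step is to revise the prompt and run the loop again, which matches the iterate-and-regenerate advice earlier in the workflow.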

Building Sustainable Content Strategies With Next Generation AI Tools

The integration of Image to Video AI into a standard workflow represents a shift toward more agile and responsive content creation. By removing the traditional bottlenecks of filming and editing, creators can stay ahead of trends and maintain a consistent presence across multiple platforms. The potential of this technology lies in its ability to take the static past and propel it into a dynamic future, ensuring that every image has the opportunity to tell a much larger story.
